But, that extra work can be worth the effort, because when done right, parallel execution increases the overall throughput of a program enabling us to break down large tasks to accomplish them faster, or to accomplish more tasks in a given amount of time. Those coordination challenges are part of what make writing parallel programs harder than simple sequential programs.

And, there might be times when one of us has to wait for the other cook to finish a certain step before we continue on. We have to spend extra effort to communicate with each other to coordinate our actions. Adding a second cook in the kitchen doesn't necessarily mean we'll make the salad twice as fast, because having extra cooks in the kitchen adds complexity. By working together in parallel, it only took us two minutes to make the salad which is faster than the three minutes it took Barron to do it alone. So we had to coordinate with each other for that step. That final step of adding dressing was dependent on all of the previous steps being done. While I was slicing cucumbers and onions, Barron was chopping lettuce and tomatoes. Working together, we broke the recipe into independent parts that can be executed simultaneously by different processors. And when I'm done chopping lettuce, I'll slice the tomatoes. While I chop the lettuce, - I'll slice the cucumber. Now that we can break down the salad recipe and execute some of those steps in parallel. Two cooks in the kitchen represent a system with multiple processors. That's my personal speed record, and I can't make a salad any faster than that without help.

Each step takes some amount of time and in total, it takes me about three minutes to execute this program and make a salad. I'll slice, and chop ingredients as fast as I can, but there's a limit to how quickly I can complete all of those tasks by myself.

The time it takes for a sequential program to run is limited by the speed of the processor and how fast it can execute that series of instructions. This type of serial or sequential programming is how software has traditionally been written, and it's how new programmers are usually taught to code, because it's easy to understand, but it has its limitations. And I can only execute one instruction at any given moment. The program is broken down into a sequence of discreet instructions that I execute one after another. As a single cook working alone in the kitchen, I'm a single processor executing this program in a sequential manner. I'll try not to cry while I slice the onion. Next, I'll slice and add a few chunks of tomato. Then I'll slice up a cucumber and add it. So, to execute the program or recipe to make a salad, I'll start by chopping some lettuce and putting it on a place. Like a computer, I simply follow those instructions to execute the program. A computer program is just a list of instructions that tells a computer what to do like the steps in a recipe that tell me what to do when I'm cooking.

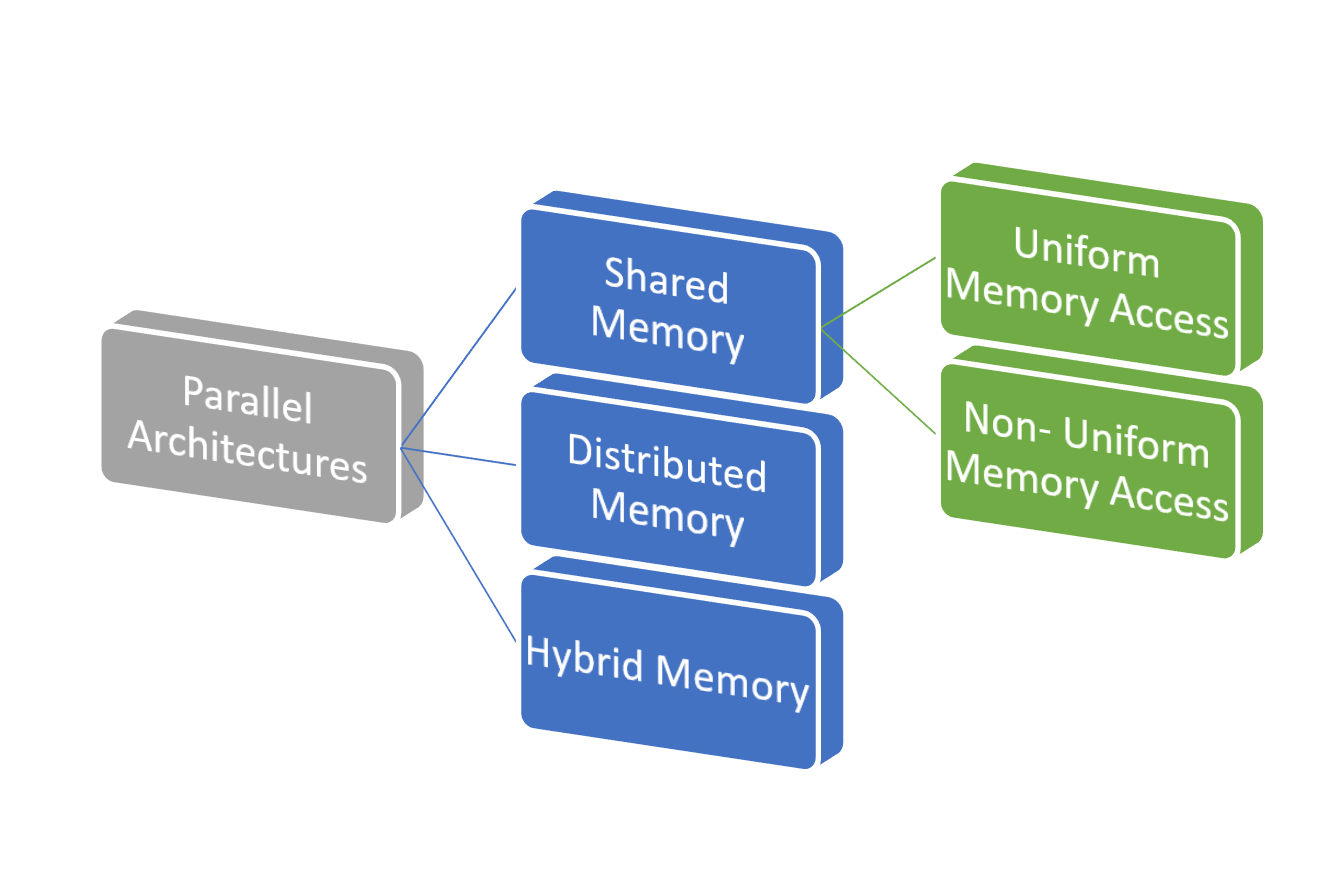

Why it's worth the extra effort to write parallel code. It turns out to be way slower than the F/J implementation.- Let's start by looking at what parallel computing means and why it's useful. But the results are the same.īTW, I wanted to compare it with the similar implementation with Fork/Join. To see if the access to the shared Atomic variables can cause this issue, I commented out the three lines with the comment "see text", and looked at the system monitor to see how long the execution takes. But still it must be the same number also if I set nThreads to 1. It might be related to the fact that the size of each "task" submitted to the executor service is really small. Surprisingly, it will run faster if I set the nThreads to 1. I am trying out the executor service in Java, and wrote the following code to run Fibonacci (yes, the massively recursive version, just to stress out the executor service).
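The salad example can be sketched in Java with an `ExecutorService`. This is only a minimal illustration under stated assumptions, not code from the course or from the question above: the class `SaladKitchen`, the helper `prepare`, and the variable names `firstCook` and `secondCook` are all hypothetical. Two worker threads stand in for the two cooks, each handling its own independent steps, and the dressing is added only after both have finished, which is the coordination point.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.ExecutionException;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Future;

public class SaladKitchen {

    // Hypothetical prep step: returns a label for the prepared ingredient.
    static String prepare(String action, String ingredient) {
        return action + " " + ingredient;
    }

    public static List<String> makeSalad() throws InterruptedException, ExecutionException {
        // Two threads stand in for two cooks (an assumption of this sketch).
        ExecutorService cooks = Executors.newFixedThreadPool(2);
        try {
            // Independent steps: each cook works through its own ingredients.
            Future<List<String>> firstCook = cooks.submit(() ->
                    List.of(prepare("chopped", "lettuce"), prepare("sliced", "tomato")));
            Future<List<String>> secondCook = cooks.submit(() ->
                    List.of(prepare("sliced", "cucumber"), prepare("sliced", "onion")));

            // Coordination: get() blocks until the corresponding cook is done.
            List<String> salad = new ArrayList<>();
            salad.addAll(firstCook.get());
            salad.addAll(secondCook.get());

            // Dependent final step: runs only after all prep has finished.
            salad.add("dressing");
            return salad;
        } finally {
            cooks.shutdown();
        }
    }

    public static void main(String[] args) throws Exception {
        // prints [chopped lettuce, sliced tomato, sliced cucumber, sliced onion, dressing]
        System.out.println(makeSalad());
    }
}
```

The two `get()` calls are where one thread waits on another. With tasks this small, the cost of submitting work and waiting on results can easily outweigh any gain from running them in parallel.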
